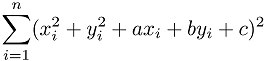

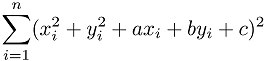

Consider a set of n points (x1, y1), ..., (xn,yn) on a Cartesian space. Your task is to write a program for regression to a circle x2 + y2 + ax + by + c = 0. In other words, your program should find a circle that minimizes the error. Here the error is measured by the sum over square distances between each point and the circle, which is given by:

The input begins with a line containing one integer n (3 <= n <= 40,000). Then n lines follow. The i-th line contains two integers xi and yi (0 <= xi, yi <= 1,000), which denote the coordinates of the i-th point.

You can assume there are no cases in which all the points lie on a straight line.

Print three integers a, b and c, separated by space, to show the regressed function. The output values should be in a decimal fraction and should not contain an error greater than 0.001.

4 0 0 100 0 100 100 0 100

-100.000 -100.000 0.000

3 0 0 10 0 5 100

-10.000 -99.750 0.000